At Bloomberg, radical mathematician and data scientist Cathy O’Neil writes about the troubling ways that algorithms are being used in criminal investigations, with little oversight or understanding of their accuracy and effectiveness. She also suggests a number of ways that government use of algorithms can be made more transparent. Check out an excerpt from the piece below, or the full text here.

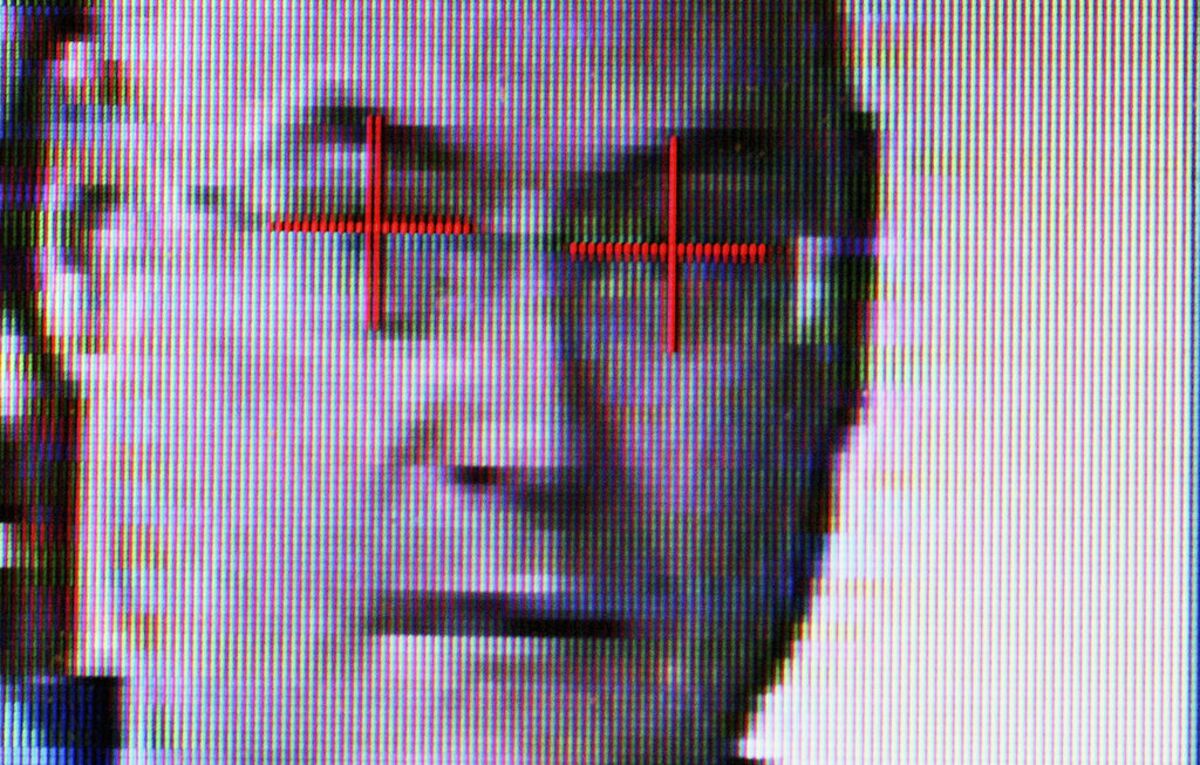

So who will monitor the algorithms, to be sure they’re acting in people’s best interests? Last week’s congressional hearing on the FBI’s use of facial recognition technology for criminal investigations demonstrated just how badly this question needs to be answered. As the Guardian reported, the FBI is gathering and analyzing people’s images without their knowledge, and with little understanding of how reliable the technology really is. The raw results seem to indicate that it’s especially flawed for blacks, whom the system also disproportionately targets.

In short, people are being kept in the dark about how widely artificial intelligence is used, the extent to which it actually affects them and the ways in which it may be flawed. That’s unacceptable. At the very least, some basic information should be made publicly available for any algorithm deemed sufficiently powerful. Here are some ideas on what a minimum standard might require:

Scale. Whose data is collected, how, and why? How reliable are those data? What are the known flaws and omissions?

Impact. How does the algorithm process the data? How are the results of its decisions used?

Accuracy. How often does the algorithm make mistakes – say, by wrongly identifying people as criminals or failing to identify them as criminals? What is the breakdown of errors by race and gender?
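To make the accuracy question concrete, here is a minimal sketch of how such an error breakdown might be computed. It is an illustration only, not something taken from O’Neil’s piece or from any real system: the function name `error_rates_by_group`, the record layout, and the sample records are all hypothetical. It assumes each record holds a demographic group label, whether the algorithm flagged the person, and whether the person was in fact a true match.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Compute false positive and false negative rates per group.

    `records` is an iterable of (group, predicted, actual) tuples, where
    `predicted` is True if the algorithm flagged the person and `actual`
    is True if the person really was a match. This layout is an assumption
    made for illustration.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "neg": 0, "pos": 0})
    for group, predicted, actual in records:
        c = counts[group]
        if actual:
            c["pos"] += 1
            if not predicted:
                c["fn"] += 1  # a real match the algorithm missed
        else:
            c["neg"] += 1
            if predicted:
                c["fp"] += 1  # a non-match wrongly flagged

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            # Share of non-matches that were wrongly flagged.
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else None,
            # Share of real matches that were missed.
            "false_negative_rate": c["fn"] / c["pos"] if c["pos"] else None,
        }
    return rates


# Made-up records, for illustration only: (group, predicted, actual)
sample = [
    ("A", True, False),
    ("A", False, False),
    ("A", True, True),
    ("B", False, True),
    ("B", True, True),
    ("B", False, False),
]

for group, r in sorted(error_rates_by_group(sample).items()):
    print(group, r)
```

Reporting the two rates separately for each group matters because a single overall accuracy figure can hide the case where one group bears most of the wrongful identifications.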

Image via Bloomberg.